What is SSO?

Single sign-on (SSO) is an identity authentication process that permits an identity to enter one name and password in order to access multiple applications. The process authenticates the identity (a user, a device, or an app) for all the applications it has been authorized (authz) to use, and eliminates further prompts when it switches applications during a particular session.

There are multiple ways to implement an SSO solution in an enterprise infrastructure; a discussion of the various forms of SSO is outside the scope of this article. Splunk employs a proxy-based SSO solution, which is a bit different from traditional web-cookie-based SSO: it does not leverage any standards-based protocol such as SAML to implement its SSO solution.

Splunkweb SSO:

- Not a password reset mechanism

- Not a replacement for an identity management solution

- Does not change or add any data in your LDAP infrastructure; all it requires is read-only access to your identity data

- Splunk CLI cannot participate in this SSO

- The Splunk configuration management port does not rely on cookies, hence it cannot benefit from the SSO. This means invoking https://localhost:8089 will still require authentication regardless of the existence of SSO.

Pre-requisites

The goal of Splunkweb SSO configuration is to delegate Splunkweb authentication to the customer's centralized IT authentication systems. Splunk itself does not implement an SSO solution based on industry-standard protocols. To establish a Splunkweb session, it relies on a trusted identity that the enterprise's authentication systems pass to Splunkweb as an HTTP request header (typically set and sent by a proxy). Note that no cookies are involved in establishing the trust between the IT system and Splunk, meaning intermediaries such as proxies are not required to set a domain cookie and forward it to Splunkweb.

For this proxy-based SSO to work, the following pre-requisites must be met for the scenario that I have tested. There are other ways to implement this solution, such as having an IAM product set the header with the required identity; from then on the process is the same as described in this article.

- A proxy server (typically IIS or Apache; for this exercise Apache/2.2.15 was used)

- Apache server configured as a reverse proxy with mod_proxy_http.so and mod_ldap.so enabled

- An LDAP server provisioned with appropriate groups and users

- No authz configured on the proxy; authz will be done by splunkd

- A working Splunk system

How it works?

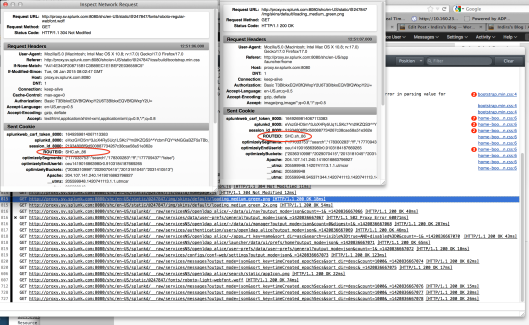

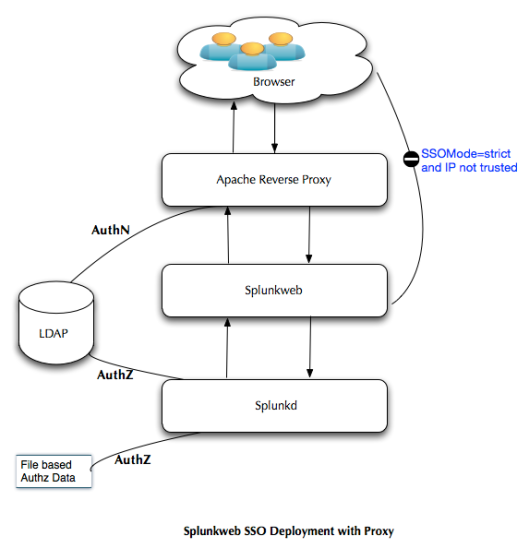

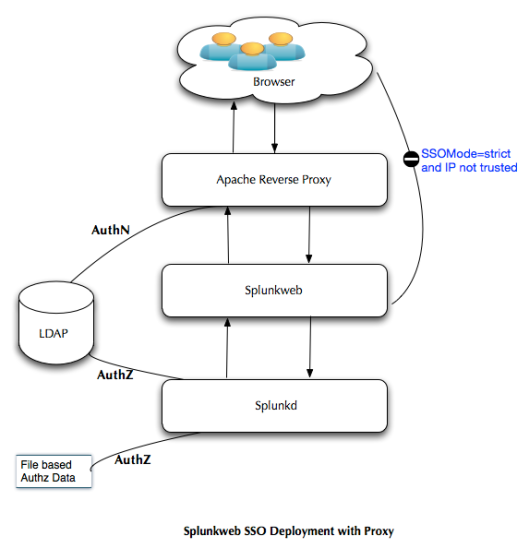

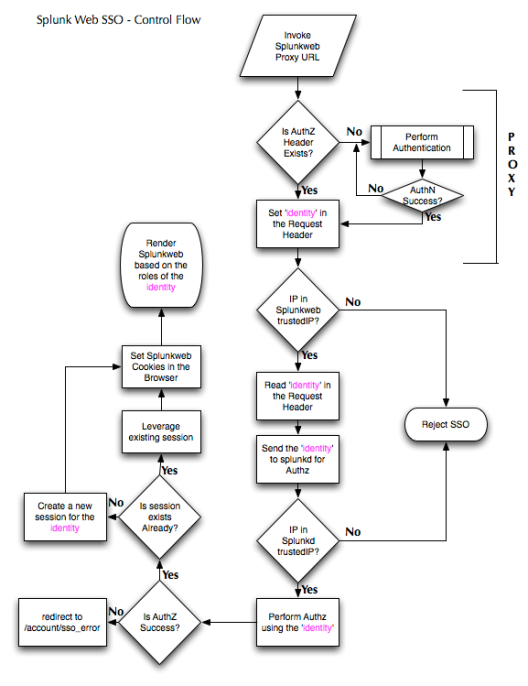

Splunk administrators and users invoke Splunkweb via the proxy URL that is deployed in front of Splunkweb, as shown in Figure 1. The proxy is configured to authenticate the incoming request; upon successful authentication it sets a request header with the authenticated identity's attribute (this could be the uid or whatever attribute you want to pass on). If Basic authentication is used (as it is in this exercise), the Authorization header is also set in the browser. Once this header is available, subsequent access to the proxy URL will not prompt for authentication again; the browser keeps reusing the Authorization header. The only way to clear the header, and thereby force authentication again, is to close the browser.
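To make the Basic authentication behaviour above more concrete, here is a minimal Python sketch of how a Basic Authorization header value is formed and read back. The function names are hypothetical and exist only for illustration; the encoding itself (base64 of user:password) is the standard HTTP Basic scheme.

```python
import base64
from typing import Tuple


def basic_auth_header(user: str, password: str) -> str:
    """Build the Authorization header value that a browser sends for HTTP Basic auth."""
    token = base64.b64encode(f"{user}:{password}".encode("utf-8")).decode("ascii")
    return f"Basic {token}"


def decode_basic_auth(header_value: str) -> Tuple[str, str]:
    """Recover (user, password) from a Basic Authorization header value."""
    scheme, _, token = header_value.partition(" ")
    if scheme.lower() != "basic":
        raise ValueError("not a Basic Authorization header")
    # The credentials are only base64-encoded, not encrypted.
    user, _, password = base64.b64decode(token).decode("utf-8").partition(":")
    return user, password
```

Because the credentials are merely encoded, anyone who can observe the header can recover them, which is one more reason to run the proxy over TLS.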

The proxy server then makes a request to Splunkweb that includes the request header; for this exercise let it be X-Remote-User. Take care with the exact spelling and case of the header name, because the name configured in Splunk must match the name that Splunkweb receives. Splunkweb is configured for SSO and knows where to look for the identity information (the web.conf property remoteUser = X-Remote-User). It also checks the incoming IP address against the trustedIP list, which is likewise configured in web.conf. If the incoming client IP is in Splunkweb's trustedIP list, Splunkweb proceeds by requesting authz for the identity given in the request header.
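The Splunkweb-side check described above can be sketched roughly as follows. This is an illustrative model under stated assumptions, not Splunk's actual code: the function name, the plain dictionary of headers, and the exact-name lookup are all hypothetical.

```python
from typing import Mapping, Optional, Sequence


def trusted_identity(headers: Mapping[str, str],
                     client_ip: str,
                     trusted_ips: Sequence[str],
                     remote_user_header: str = "X-Remote-User") -> Optional[str]:
    """Return the identity carried in the request header, or None if SSO must not proceed."""
    # Step 1: the request must come from an address in the trustedIP list.
    if client_ip not in trusted_ips:
        return None
    # Step 2: read the identity from the configured header (exact-name lookup,
    # mirroring the advice to match the header name precisely).
    identity = headers.get(remote_user_header, "").strip()
    return identity or None
```

A non-None result corresponds to the point where Splunkweb hands the identity to splunkd for authorization.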

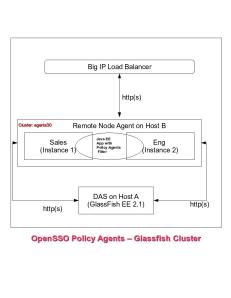

Splunkweb SSO Deployment – Figure 1

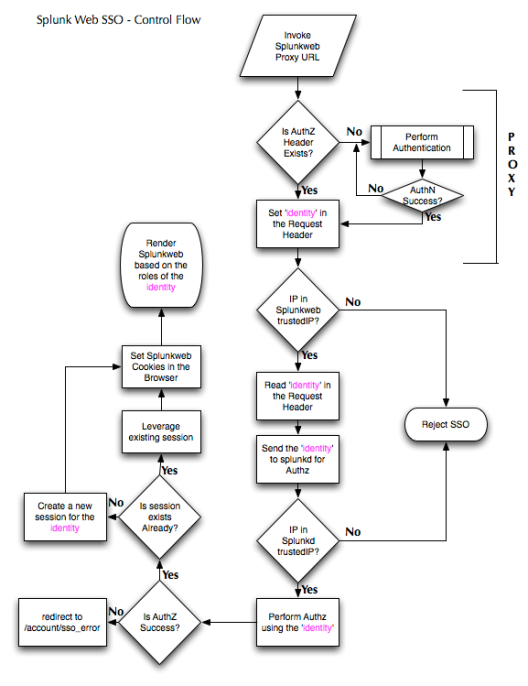

At this point, upon receiving the request from Splunkweb, splunkd verifies whether the incoming IP address matches the value of the trustedIP property in server.conf. This is a single-valued property, unlike the trustedIP property in web.conf, which is multivalued. If the IP is trusted, splunkd initiates the authorization process for the identity given in the request header (the value of X-Remote-User). In both cases, if the incoming IP address is not in the trustedIP list, the SSO attempt is rejected, as shown in Figure 2.

This authorization is performed in two stages, as shown in Figure 1.

File-based authorization, a.k.a. Splunk authorization

In this case splunkd checks whether the given identity matches any of the Splunk role(s) stored in the file system. If it finds a match, the authorization process terminates immediately, even if LDAP authorization strategies are configured in the system. Splunkd then creates a new session for the authorized identity, provided no session already exists for the identity in the request header. If a session already exists, splunkd reuses that session identifier and, based on it, creates the necessary cookies for Splunkweb's consumption. Once the cookies are present, Splunkweb resumes its flow as if it had authenticated in a non-SSO fashion.

Any subsequent access to Splunk via the proxy URL does not require re-authentication as long as the request header contains the trusted identity. Even if the splunkd session times out or is destroyed for whatever reason, the Basic auth Authorization header and the request header value are still present, so splunkd seamlessly creates a new session for the identity by repeating the authz process described earlier. The trustedIP check never lapses: the client IP is always matched against these IP addresses, and a failure to match results in SSO failure (strict mode only).

Splunkd always performs authorization against its file-based identity data, irrespective of whether an LDAP strategy is configured. This is a mandatory authorization step that cannot be disabled by a configuration tweak.

LDAP based Authorization

What happens if Splunk authz fails to find a match in its local identity database? In that case splunkd examines the configuration to determine whether any LDAP strategy is configured and enabled. If there is one, it tries to find a matching Splunk role/LDAP group mapping for the identity, and returns immediately if a match is found. Otherwise it iterates through all the enabled LDAP strategies until it finds a match or has exhausted all the active LDAP strategies, which means no authorization match was found. In that last case it redirects the browser to the /account/sso_error page, as shown in Figure 2.

LDAP-based authorization is an optional step that only kicks in when no match is found in Splunk's native identity database. If no LDAP strategies are configured or enabled, no LDAP-based authorization is performed.

The entire control flow of the single sign-on process is depicted below in Figure 2.
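The two authorization stages described above can be modelled with a short Python sketch. The data shapes here (a dictionary of native roles, and LDAP strategies represented as dictionaries with `enabled`, `group_members`, and `role_map` keys) are hypothetical stand-ins chosen for illustration; splunkd's real data structures are not shown in this article.

```python
from typing import Dict, List, Optional, Sequence


def authorize(identity: str,
              native_roles: Dict[str, List[str]],
              ldap_strategies: Sequence[dict]) -> Optional[List[str]]:
    """Return the roles for an identity, or None when no authorization match exists."""
    # Stage 1: file-based (native Splunk) authorization always runs first.
    roles = native_roles.get(identity)
    if roles:
        return list(roles)  # a native match ends the process immediately

    # Stage 2: walk the enabled LDAP strategies until one yields a match.
    for strategy in ldap_strategies:
        if not strategy.get("enabled", False):
            continue
        matched = [role
                   for group, role in strategy["role_map"].items()
                   if identity in strategy["group_members"].get(group, set())]
        if matched:
            return sorted(matched)

    # No match anywhere: the caller would redirect to /account/sso_error.
    return None
```

Returning None models the failure branch; everything else models the point where a session is created for the identity.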

Splunkweb SSO control Flow – Figure 2

Properties that arbitrate SSO behavior

There are a number of properties that need to be set in order to orchestrate the single sign-on process. These properties come from Splunkweb's web.conf and the Splunk daemon's server.conf. In this section let me unravel the merits of setting or not setting these properties. All the proxy-specific properties are deferred to a later section, as they do not fall under the purview of Splunk.

Properties Specific to Splunkweb

The properties discussed in this section pertain to Splunk's HTTP web server. Most of the SSO properties affect Splunkweb, as the SSO is about Splunkweb, not the Splunk daemon or the Splunk command line interface.

The following properties in web.conf (SPLUNK_HOME/etc/system/local) need to be configured:

The remoteUser property names the HTTP request header through which the proxy server passes the authenticated identity's attribute. In this exercise it is set to X-Remote-User. Typically the value carried would be the RDN of the identity if it is stored in an LDAP server; nevertheless, any LDAP attribute can be passed via this request header, as long as the proxy sets it properly after authentication. Be mindful to use request headers, not response headers. If you configure your proxy to set a response header with the Header directive, SSO will not happen, because Splunk only reads request headers to obtain the trusted identity's attribute. You must use the RequestHeader directive in your proxy configuration to pass the identity's attribute to Splunk. Splunk defaults this value to REMOTE_USER.

Splunkweb SSO can operate in two modes, selected by the SSOMode property, both giving a certain level of security to protect the Splunkweb resources. Resources in Splunkweb that can be accessed anonymously are not affected by this property. As of this writing the default value is permissive; if the property is not set, it likewise defaults to permissive mode. The property can take the following two values, strict and permissive:

Strict is the most hardened mode, and Splunk recommends that customers deploy in this mode. With this mode in place, access to Splunkweb resources is allowed only from the client IP addresses listed in the trustedIP property (see below). All requests that do not originate from the trustedIP list are summarily rejected by Splunkweb. Whether the request is made via the proxy URL or directly using the Splunk host/IP address, it is rejected if the client's IP address is not listed in the trustedIP property. If you are using a reverse proxy, as I did for this exercise, it is very important to set tools.proxy.on = True so that Splunkweb sees the client IP address instead of the proxy's IP address.

Permissive mode behaves exactly like strict mode, except that requests made by hitting the Splunkweb URL directly, rather than via the reverse proxy server, are still served even though the client's originating IP address is not in the trustedIP list. (In strict mode, by contrast, it is not possible to log in even via the direct Splunkweb URL.) This mode is meant for testing and troubleshooting scenarios. It is susceptible to cookie hijack attacks. Use this mode at your discretion.

The trustedIP property is a multivalued list of client IP addresses. Splunkweb serves only the requests originating from these clients; any request that does not come from these IP addresses is rejected if SSOMode = strict. In permissive mode Splunkweb continues to serve all requests as long as authentication and authorization are proper. Typically this will be set to the proxy's IP address, unless tools.proxy.on = True is set.

tools.proxy.on is a boolean property that takes either True or False. Out of the box this property is not set. In a reverse proxy scenario, set it to True if you want to authorize based on the browser's IP address; a False value authorizes based on the proxy's IP address.
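The interplay of SSOMode, trustedIP, and tools.proxy.on can be summarised in a small Python sketch. It is a simplified model, not Splunkweb's implementation: the function names and the three outcome labels are hypothetical, and the use of the X-Forwarded-For header to find the browser's address is an assumption about how the proxy forwards the client IP.

```python
from typing import Mapping, Sequence


def effective_client_ip(peer_ip: str, headers: Mapping[str, str], proxy_on: bool) -> str:
    """Pick the address that is compared against trustedIP."""
    if proxy_on:
        # Assumption: the reverse proxy records the browser address in X-Forwarded-For.
        first = headers.get("X-Forwarded-For", "").split(",")[0].strip()
        if first:
            return first
    # Without tools.proxy.on, the connecting peer (the proxy itself) is used.
    return peer_ip


def sso_decision(mode: str, client_ip: str, trusted_ips: Sequence[str]) -> str:
    """Return 'sso', 'reject', or 'login' for a request, per the modes described above."""
    if client_ip in trusted_ips:
        return "sso"      # trusted address: proceed with header-based SSO
    if mode == "strict":
        return "reject"   # strict: untrusted addresses are refused outright
    return "login"        # permissive: fall back to the regular login flow
```

For example, with tools.proxy.on enabled the trustedIP list would hold browser addresses, whereas with it disabled the list would hold the proxy's address.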

The root_endpoint property defines the context for Splunkweb; by default it is the same as the root context of the proxy and the Splunk app server. Customers can use this property to redefine the root context of the web/app server to something else. For instance, set

root_endpoint=/lzone

in the web.conf file under the settings stanza. With this setting Splunkweb is accessed via http://splunk.example.com:8000/lzone instead of http://splunk.example.com:8000/. To make the proxy aware of this, you have to map it accordingly in httpd.conf, with something like:

ProxyPass /lzone http://splunkweb.splunk.com:8000/lzone

ProxyPassReverse /lzone http://splunkweb.splunk.com:8000/lzone

This concludes the relevant properties that need to be configured in web.conf.

Properties Specific to Splunk daemon

There is only one property pertinent to the Splunk daemon: trustedIP. It needs to be set in server.conf under the general stanza.

The trustedIP property plays the key role in determining whether SSO is enabled in a Splunk deployment. If it is not set, SSO for Splunk is not enabled. It is a single-valued property, unlike its Splunkweb counterpart. It is almost always the IP address of Splunkweb's host.

That concludes the “How it works” section of this article. In the next few sections I am going to walk you through an exercise of configuring SSO for Splunkweb using an Apache proxy server.

Test Servers

WARNING:

It is highly recommended that any HTTP-header-based solution be implemented over a TLS/SSL-enabled deployment. In my testing I defaulted to open (unencrypted) mode purely for academic testing purposes. Customers MUST NOT deploy this solution without securing their transport layer.

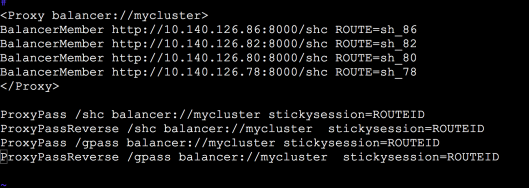

Configuring Apache Proxy

There are a lot of documents on the internet on how to configure the Apache mod_proxy server. In my testing I used an Apache server (2.x on Linux) with mod_proxy and mod_ldap enabled to provide proxying and LDAP authentication.

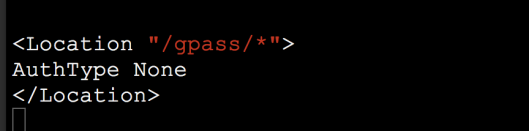

Here are the three critical configurations that need to be performed in the httpd.conf of the Apache server.

- Setup proxy URL for Splunkweb

It is a very straightforward process to configure the Apache server as a reverse proxy. Here is the relevant part of the httpd.conf that configures the proxy for Splunkweb; it can appear under your virtual server instance or at the global level.

ProxyRequests Off

ProxyPassInterpolateEnv On

ProxyPass / http://10.3.1.61:8000/

ProxyPassReverse / http://10.3.1.61:8000/

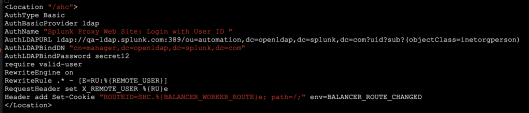

- Setup authentication for the proxy URL

This is the key step in the SSO configuration: Splunk offloads the authentication process to the proxy, so the proxy must authenticate the incoming connection in order to set the request header with the authenticated identity's attribute. In this exercise I used an OpenDS server to perform the user authentication. The configuration is fairly trivial if mod_ldap is enabled. The following configuration text should appear between <Location "/"> and </Location>:

AuthType Basic

AuthBasicProvider ldap

AuthName "Splunk Proxy Web Site: Login with User ID "

AuthLDAPURL "ldap://10.3.1.61:1389/ou=people,O=Splunk Inc.,L=San Francisco,c=US?cn?sub?(objectClass=inetorgperson)"

AuthLDAPBindDN "cn=directory manager"

AuthLDAPBindPassword mysecret

require valid-user

- Setup Request Header to pass the identity information

Once the authentication is successful, the proxy server is supposed to set the request header that Splunkweb consumes to perform the SSO integration. You can set any value in the header as long as Splunkweb can make sense of it to perform the authorization. Typically it is set to the value of the LDAP strategy's user naming attribute, e.g. the value of the uid. Here is how it is configured in httpd.conf. The following configuration text should appear between <Location "/"> and </Location>:

RewriteEngine on

RewriteRule .* - [E=RU:%{REMOTE_USER}]

RequestHeader set X_REMOTE_USER %{RU}e

This completes the configuration on the Apache server. There is a subtle point to note here: even though the request header name is set as X_REMOTE_USER, at the receiving end it shows up as X-Remote-User, so be aware of this and set the remoteUser property accordingly in web.conf.
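One plausible explanation for the renaming noted above, offered here as an assumption rather than a confirmed description of Splunkweb internals, is the CGI/WSGI convention used by Python web servers: incoming header names are upper-cased and hyphens become underscores, so the underscore and hyphen spellings collapse to the same key, which is then rendered back in hyphenated form. The helper names below are hypothetical.

```python
def wsgi_environ_key(header_name: str) -> str:
    """Map an HTTP header name to its CGI/WSGI environ key."""
    return "HTTP_" + header_name.upper().replace("-", "_")


def display_name(environ_key: str) -> str:
    """Render an environ key back as a conventional hyphenated header name."""
    parts = environ_key[len("HTTP_"):].split("_")
    return "-".join(part.capitalize() for part in parts)
```

Under this convention both spellings of the header are indistinguishable once they reach the application, which would account for the name seen on the receiving end.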

Configuring Splunkweb properties

We discussed the functionality of these properties at length earlier; here is the configuration that needs to go in web.conf under the [settings] stanza.

SSOMode = strict

trustedIP = 127.0.0.1,10.3.1.61,10.1.8.81

remoteUser = X-Remote-User

tools.proxy.on = True
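As a quick sanity check of a stanza like the one above, the following Python sketch parses it with the standard library's configparser and applies the defaults mentioned earlier in this article (permissive for SSOMode, REMOTE_USER for remoteUser). The function name and the returned dictionary layout are hypothetical; this is a convenience check, not how Splunk itself reads web.conf.

```python
import configparser

WEB_CONF = """
[settings]
SSOMode = strict
trustedIP = 127.0.0.1,10.3.1.61,10.1.8.81
remoteUser = X-Remote-User
tools.proxy.on = True
"""


def load_sso_settings(text: str) -> dict:
    """Parse the [settings] stanza and return the SSO-related values."""
    parser = configparser.ConfigParser()
    parser.optionxform = str  # keep property names case-sensitive
    parser.read_string(text)
    settings = parser["settings"]

    mode = settings.get("SSOMode", "permissive")
    if mode not in ("strict", "permissive"):
        raise ValueError(f"unexpected SSOMode: {mode!r}")

    return {
        "mode": mode,
        # trustedIP in web.conf is a comma-separated, multivalued list.
        "trusted_ips": [ip.strip() for ip in settings.get("trustedIP", "").split(",") if ip.strip()],
        "remote_user": settings.get("remoteUser", "REMOTE_USER"),
        "proxy_on": settings.getboolean("tools.proxy.on", fallback=False),
    }
```

Calling `load_sso_settings(WEB_CONF)` yields the mode, the list of trusted addresses, the header name, and the proxy flag in one dictionary.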

Configuring Splunkd properties

There is only one property for the Splunk daemon, trustedIP, which is set in server.conf under the [general] stanza with the following value:

trustedIP=127.0.0.1

Creating LDAP strategy for Authorization

As we saw in the earlier section, the Splunk daemon performs authorization before creating a web session (cookies) for the identity supplied via the request header (X-Remote-User). Using Splunk's own identity database for authz is trivial, so let us instead use the same LDAP server that the proxy uses for authentication for authorization as well. To make this happen you have to create an LDAP strategy in Splunk that points at that LDAP server. Here is the REST command that creates the LDAP strategy for you.

curl -k -u admin:changeme -d "name=locaOpenDS" --data-urlencode "bindDN=cn=directory manager" \
  -d "bindDNpassword=secret12" --data-urlencode "groupBaseDN=O=Splunk Inc.,L=San Francisco,c=US" \
  -d "groupMappingAttribute=dn" -d "groupMemberAttribute=member" -d "groupNameAttribute=cn" -d "host=10.3.1.61" \
  -d "port=1389" -d "realNameAttribute=cn" --data-urlencode "userBaseDN=O=Splunk Inc.,L=San Francisco,c=US" \
  -d "userNameAttribute=cn" "https://localhost:8089/services/authentication/providers/LDAP"
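If you prefer to script this step, the form body sent by the curl command above can be assembled in Python as sketched below. The function name is hypothetical, and the sketch only builds the URL-encoded payload; the field names and values are the ones from the curl command, and actually posting the body to the endpoint is left out.

```python
from urllib.parse import urlencode


def ldap_strategy_form(bind_password: str) -> bytes:
    """Build the URL-encoded POST body for creating the LDAP strategy."""
    fields = {
        "name": "locaOpenDS",
        "bindDN": "cn=directory manager",
        "bindDNpassword": bind_password,
        "groupBaseDN": "O=Splunk Inc.,L=San Francisco,c=US",
        "groupMappingAttribute": "dn",
        "groupMemberAttribute": "member",
        "groupNameAttribute": "cn",
        "host": "10.3.1.61",
        "port": "1389",
        "realNameAttribute": "cn",
        "userBaseDN": "O=Splunk Inc.,L=San Francisco,c=US",
        "userNameAttribute": "cn",
    }
    # urlencode escapes spaces, commas and '=' inside the DN values,
    # which is what --data-urlencode does for the curl command.
    return urlencode(fields).encode("ascii")
```

The resulting bytes can be used as the body of a POST to the /services/authentication/providers/LDAP endpoint with any HTTP client.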

That is it: we are done with the configuration required to integrate Splunkweb into the enterprise IT infrastructure, thereby delegating the authentication to the proxy server. In the next section let us trace the behaviour of SSO in real time and learn how to troubleshoot the setup if it is not working.

Accessing the Splunkweb Via Proxy

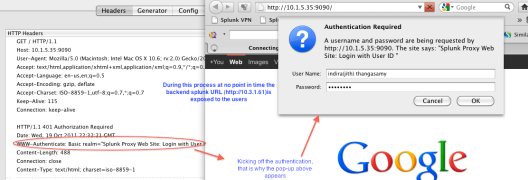

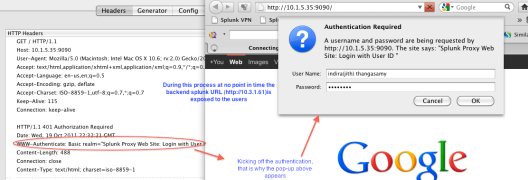

Open a new browser and enter http://10.1.5.35:9090 in the address bar. You will be prompted for authentication, because the proxy is set to require authentication for the root of the URL, specified with a forward slash (/). After a successful authentication the request header is set, and based on it Splunkweb creates a web session for the authenticated identity “indira(jith) thangasamy” after performing all the song and dance for authz. Here is a screenshot of the proxy requesting authentication upon trying to access the Splunkweb URL.

Invoke the Splunkweb via Proxy URL – Figure 3

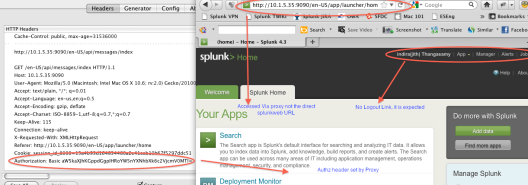

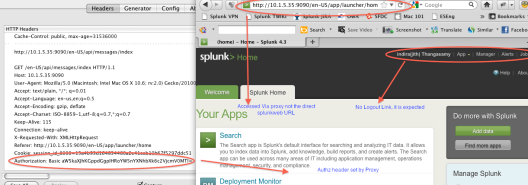

On the next page (Figure 4), Splunkweb is rendered after going through the authorization process discussed in the foregoing section. The identity “indira(jith) thangasamy” is appropriately provisioned in LDAP and mapped to an LDAP group, which in turn is mapped to a Splunk role. This part is not shown here.

Logout

As you can see, the logout link is missing in Figure 4. This is intentional; for that matter, no single-logout functionality is provided yet. Because the HTTP Basic Authorization header is still present, along with the request header X-Remote-User with a valid value, any subsequent access using the same browser will seamlessly create a session for the identity that is present in the request header. A Splunk logout link would only clear the browser cookies, not the Authorization header set by HTTP Basic proxy auth or the request header set by the proxy. If you access Splunkweb by hitting the server directly, the existence of these headers is immaterial.

The only way to clear the HTTP Basic Authorization header is to close the browser (this applies only if your proxy employs the Basic auth scheme; there are other schemes customers could resort to in order to stay away from this issue). Even then, the Splunk session is not destroyed for this user. One way to destroy it is to use the REST endpoint along with the session identifier, like the one shown below:

curl -s -uadmin:changeme -k -X DELETE https://localhost:8089/services/authentication/httpauth-tokens/990cb3e61414376554a39e390471fff0

SplunkWeb SSO – Success – Figure 4

If you do not destroy the session, it will eventually be destroyed after reaching its timeout value.
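The session-deletion call shown above can also be prepared from Python. The sketch below only constructs the request object and does not send it; the function name and its parameters are hypothetical, while the endpoint path and the DELETE method are taken from the curl command.

```python
import base64
import urllib.request
from urllib.parse import quote


def session_delete_request(session_key: str, user: str, password: str,
                           base_url: str = "https://localhost:8089") -> urllib.request.Request:
    """Build (but do not send) the DELETE request that destroys a splunkd session."""
    url = f"{base_url}/services/authentication/httpauth-tokens/{quote(session_key, safe='')}"
    request = urllib.request.Request(url, method="DELETE")
    # Same credentials that curl passes with -u, sent as HTTP Basic auth.
    token = base64.b64encode(f"{user}:{password}".encode("utf-8")).decode("ascii")
    request.add_header("Authorization", f"Basic {token}")
    return request
```

Sending the request (for example with `urllib.request.urlopen`) would additionally need a TLS context suited to your deployment, which is deliberately left out here.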

Troubleshooting

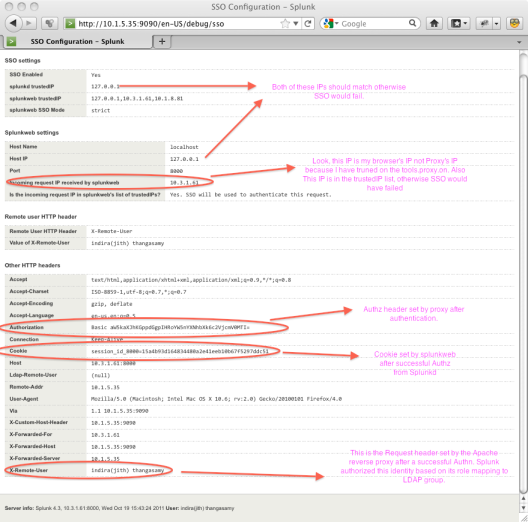

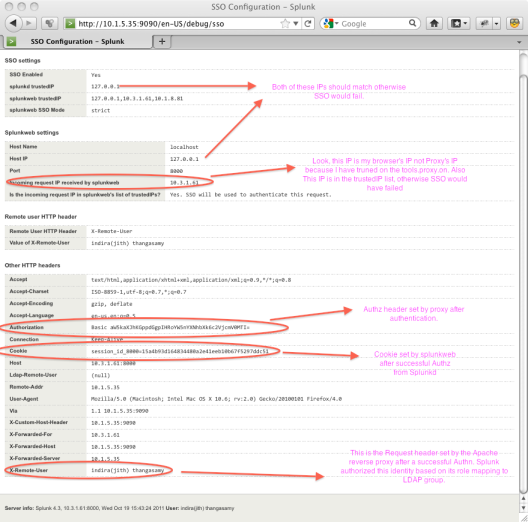

Splunkweb offers a great interface that exposes the environment and run-time data to enable administrators to debug the deployment. This page can be accessed via the proxy or the direct URL using the relative URL /debug/sso, as shown in Figure 5. The request headers will not be available if you access this page directly without going through the proxy server.

Troubleshooting with /debug/sso – Figure 5

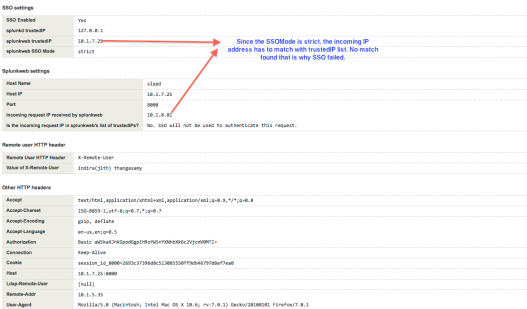

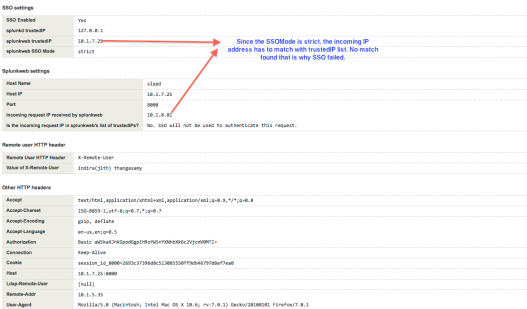

Let us look at one of the failure scenarios. In this case we are accessing Splunkweb via the proxy from a client whose IP address is not in Splunkweb's trustedIP list. The SSO will fail; as you can see from Figure 7, the client IP address does not match.

SSO Failure Scenario in strict mode – Figure 6

When you access the proxy URL from an untrusted client, you will see an error message as shown in Figure 6. In this scenario SSOMode is set to strict in the configuration.

SSO Failure Scenario debug – Figure 7

Conclusion

Splunk SSO is a misnomer for Splunkweb SSO: only the web interface of Splunk is enabled to work seamlessly when authentication is delegated to an external entity. Nevertheless, this is one of the paramount features that enables customers to integrate the authentication and authorization of Splunkweb into their existing infrastructure, thereby reducing the TCO of Splunk for our customers. Besides, SSO also improves the usability of the product and complements the existing security infrastructure by integrating seamlessly, without requiring additional special accounts or privileges to be provisioned.